Why do social networks offer right-wing extremists their platforms as a medium of communication? Critics have been asking this question for years. Now, a satisfactory answer is in sight, as several large networks are banning right-wing extremists.

It’s called “deplatforming” – a strategy that network providers, politicians, scientists, users and NGOs debate time and again. The discussions revolve around freedom of opinion versus protection against digital attacks, and around whether there is really any alternative to these large platforms when it comes to publishing: do we have a “right” to a Facebook or Twitter account in order to take part in online discussions, even if we don’t adhere to the community guidelines? Or does the principle “their house, their rules” apply to these big companies, allowing them to ban whatever they don’t like from their platforms? The regulation of social media will be an important political issue in the coming years. At EU level, discussions on the “Digital Services Act”, which is to replace the more than 20-year-old “E-Commerce Directive”, are just beginning.

Digital house rights

As companies, social networks currently have “house rights”, which are published in their “community guidelines”. Discrimination, racism, antisemitism, Islamophobia and threats have been prohibited on all (large) platforms since day one. Anyone who violates these rules can expect to be banned from the network, at least in theory. In practice, social networks are full of breaches of these rules. Why? Did the platforms initially underestimate the far right’s ideological missionary zeal, its will to network and its delight in making threats? Was the far right’s online activity met with a lack of expertise in moderation and community management? Did ignorance play a role, because the moderators and platform operators are themselves largely unaffected by online hate? Was it a misguided notion of freedom of speech, one that treats violating the freedom of others as legitimate? And, last but not least, do these platforms in fact have a commercial interest in hate and conflict? After all, interaction of any kind spells big bucks for advertising.

Facebook lobbyist and former leader of the British Liberal Democrat party, Nick Clegg, denied the latter point in an article for the German newspaper FAZ: “I want to be unmistakable: Facebook does not profit from hate speech. Billions of people use Facebook and Instagram because they have had good experiences. They don’t want to see hateful content – just like our advertisers and ourselves. There’s no incentive for us to do anything other than remove that content.” The platform’s algorithms, however, tell a different story.

Since the mid-2000s, digital crimes and threats, as well as conspiracies to commit acts of violence and terrorism, have gradually increased on these platforms, and companies are now tackling them with more vigilance and success.

“Stop Hate for Profit”

Still, many right-wing extremists remain on these platforms, not to mention the less overt antisemites, conspiracy peddlers, racists and Islamophobes. In the USA, the Anti-Defamation League (ADL) responded by launching the campaign “Stop Hate for Profit”. Reasoning that hitting their business model is the way to put pressure on companies, the ADL called on businesses to stop advertising on platforms, particularly Facebook, in July. The call met with broad approval, especially in the USA: a total of 750 companies announced that they would cut back on advertising for a month to demonstrate their commitment to combating right-wing extremism and racism. Among them were well-known names such as Coca-Cola, Unilever, Starbucks and Honda.

In the months following the death of George Floyd and the fresh wave of “Black Lives Matter” protests, many people in the USA have been asking a central question: “How can we tackle racism, which has been a part of our society for so long?” Fighting racism on social networks can be one of many steps. In Germany, too, several large companies have joined the campaign, including Lego, Volkswagen, SAP, Puma, Henkel, Fritz-Cola and Beiersdorf (see Zusammengebaut.de, Business Insider, ZDF).

Whether this campaign has been successful, or whether the networks came to realise on their own that more decisive action is needed, remains to be seen. Either way, large social networks in the USA are currently outdoing each other in throwing hate-mongers off their platforms.

Active deplatforming

Facebook

- According to Facebook, the platform has blocked hundreds of accounts associated with the far-right Boogaloo movement in the USA, a heavily armed group preparing to start a new civil war (which they call the “Boogaloo”). Its members favour the friendly look of Hawaiian shirts, because they also call the coming uprising the “big luau”, after the Hawaiian word for a feast. Igloo flags (“big igloo” is another variation of the name) and sarcastic memes with an increasingly large reach are further hallmarks of the group (see ZDF). In total, around 320 accounts, more than 100 groups and 28 pages have been blocked; the accounts comprise 220 Facebook accounts and 95 Instagram accounts (see heise.de).

- In addition, Facebook deleted 400 other groups and 100 pages that violated its guidelines and spread content similar to that of the far-right network (see heise.de).

- Facebook took the alt-right website “Breitbart News” off its list of “trustworthy” sources that feed into its news service. The company is also ending its cooperation with the alt-right “fact-checking” organisation “The Daily Caller”, which had been the source of much criticism from the beginning (see Süddeutsche Zeitung).

YouTube

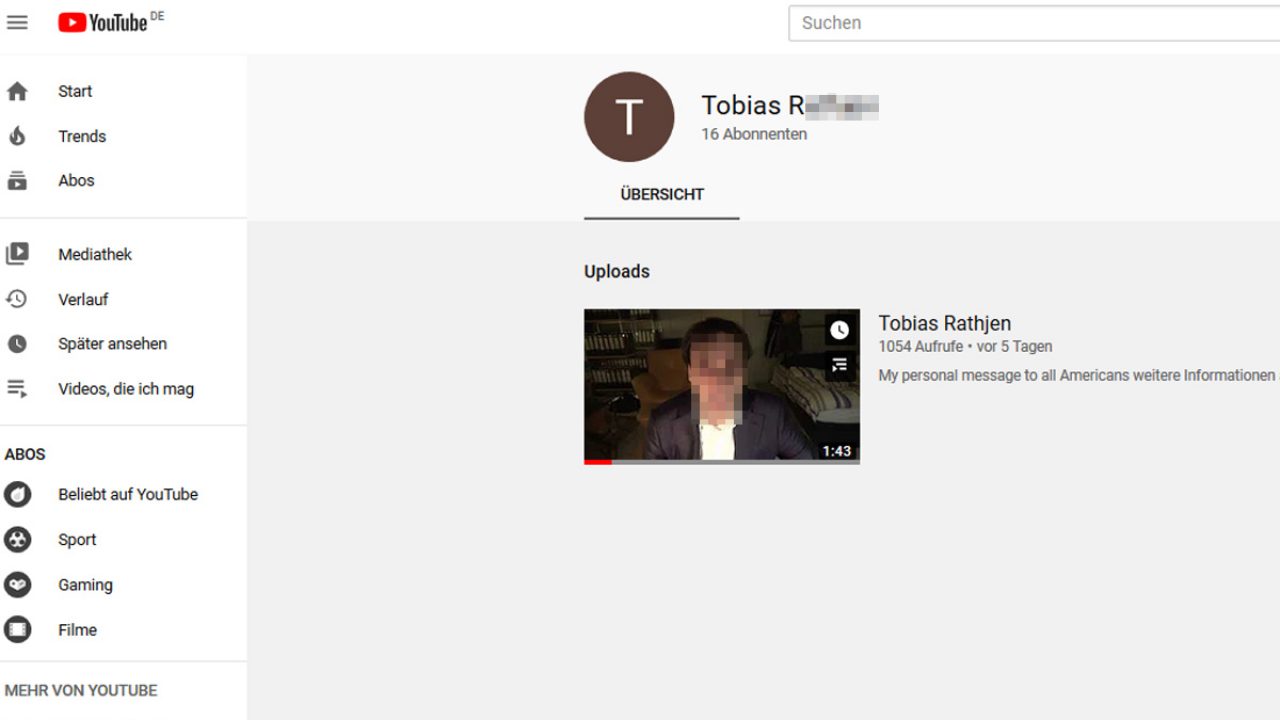

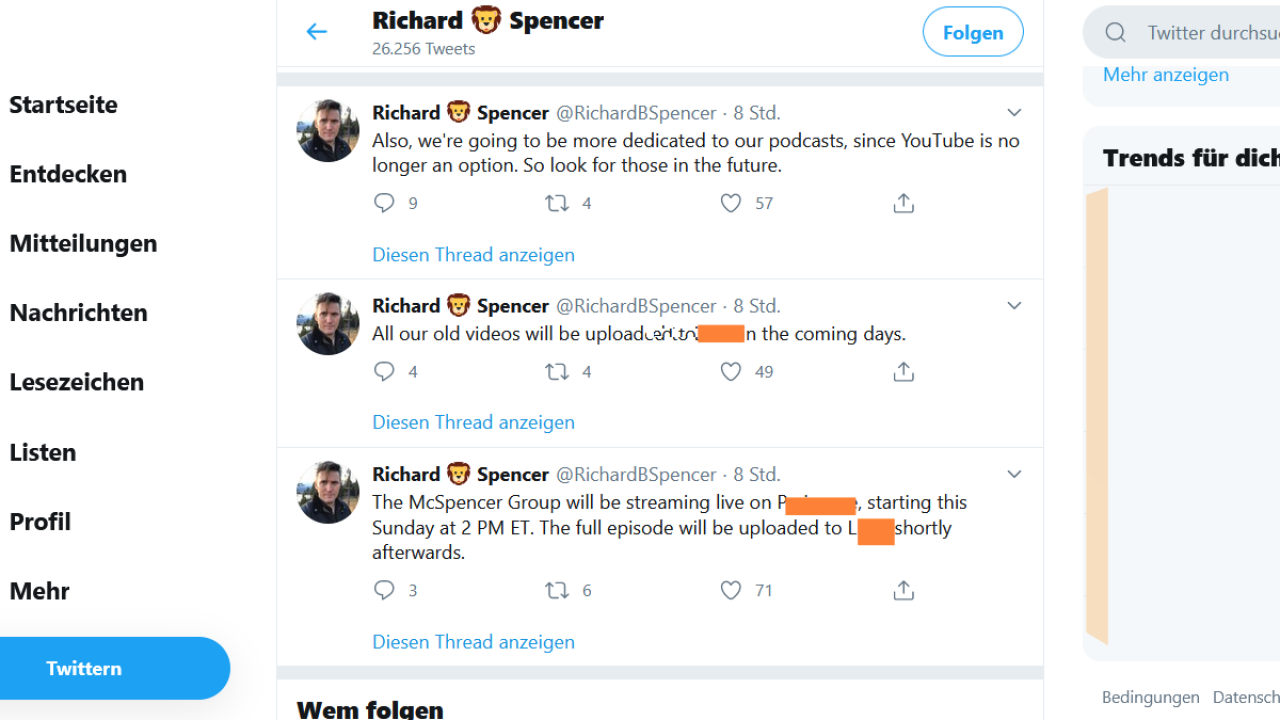

- YouTube has reportedly shut down more than 25,000 far-right and racist channels for breaching its hate speech guidelines, including the channels of well-known North American far-right figures such as Richard Spencer and Stefan Molyneux (both from the alt-right; Molyneux’s channel alone had more than 900,000 subscribers). “We have strict policies prohibiting hate speech on YouTube, and terminate any channel that repeatedly or egregiously violates those policies,” a YouTube spokesman told The Daily Beast. After the company updated its guidelines, removals of racist videos increased five-fold.

Twitch

- The streaming platform for gamers has deleted accounts that harassed and abused women and trans women on the platform, in order to prevent another outbreak of misogyny like “Gamergate”. This follows the appointment of an advisory board to make the online community safer (see Wired).

With the US election campaign under way, various social networks have decided to enforce their community guidelines even when the user is the President of the United States.

Twitter

- In June, Twitter hid a tweet by US President Donald Trump that incited violence behind a warning notice (see heise.de).

Snapchat

- Snapchat removed posts by Donald Trump from its “discover” section, which displays media content and news, among other things. The company “does not want to amplify voices that incite racial violence and injustice” (see heise.de).

Reddit

- The online platform Reddit announced new steps in the fight against hate speech and the glorification of violence. It closed the subreddit “The_Donald”, which was popular with Trump fans (800,000 members; Trump’s “troll army” in 2016), along with around 2,000 other subreddits. After George Floyd’s death, Reddit had announced changes to its community rules so that the platform could no longer be used in ways that run counter to its own values (see heise.de).

Twitch

- The streaming platform blocked the account that Trump’s campaign team used to stream campaign events. The Amazon subsidiary justified the step by citing the US president’s “hate-fuelling behaviour”. The main issue is said to have been two statements made at his campaign events that incited violence against Mexicans (see heise.de). This makes Twitch the first platform to actually ban Trump (see netzpolitik.org).

What does deplatforming achieve?

An interesting question remains: does throwing right-wing extremist accounts off platforms actually benefit society? One platform with more experience of this has already gone a step further:

Discord

- The gaming platform has had positive experiences with deplatforming. After the Charlottesville rally in 2017, when the operators realised that the alt-right (as well as German right-wing extremists) were using their network to organise hate campaigns and threats, they deleted 100 far-right groups from the platform and also invested in more specialised content moderation staff. The network began monitoring racist “white supremacist” groups in order to remove them from the site. Discord uses metadata, not just IP addresses, to make sure that banned users cannot sign up again, or at least not easily. Reporting users has been made simpler, and automatic speech recognition scans the platform for offensive language. The result: the alt-right left the platform in favour of Telegram (see Forbes).

As the big social networks are based in the USA, these developments will hopefully send a positive signal to Germany as well.

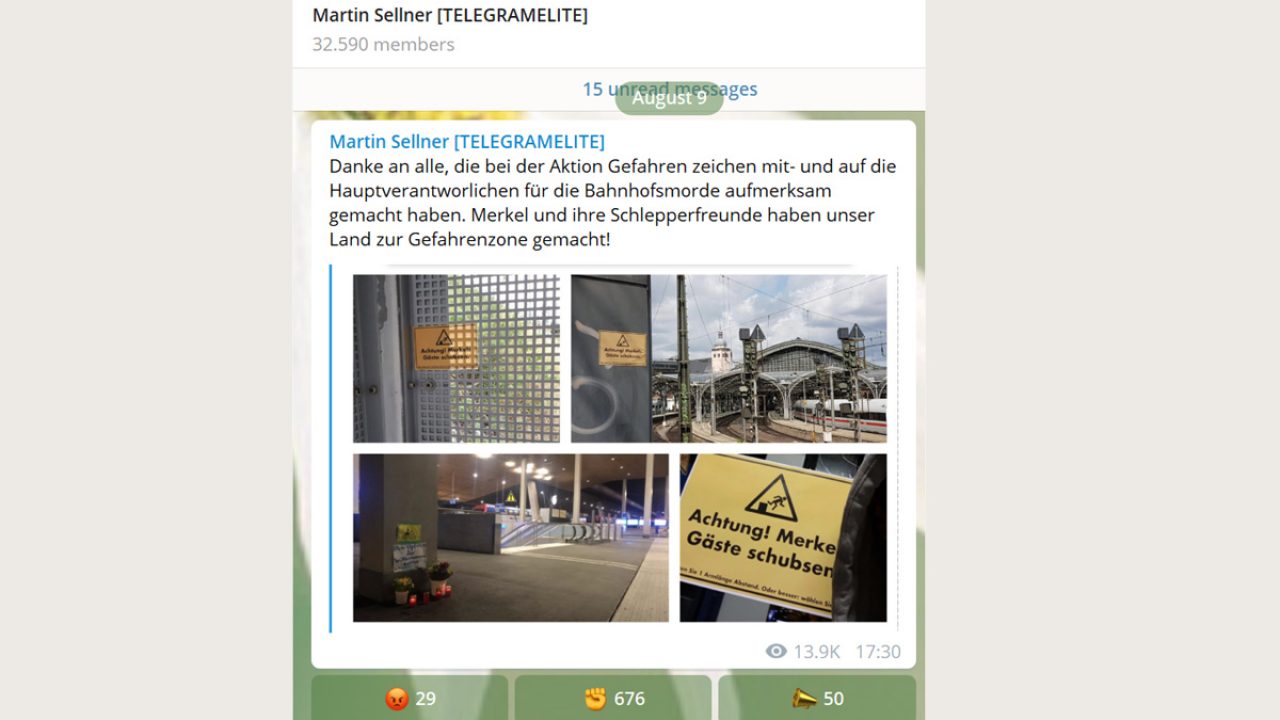

Deplatforming, however, has so far remained a game of cat and mouse. The “Volkslehrer” (People’s Teacher), for example, a right-wing extremist who has been banned from YouTube several times, keeps trying to return to the wide-reaching platform. The “Identitarian Movement” is attempting to regain a foothold on Instagram with accounts that are less obviously branded. Success therefore requires constant, not selective, vigilance. One thing, however, is crystal clear: with every ban, the far right loses followers.

“This has a positive effect in limiting the reach of far-right accounts. On the other hand, devout supporters switch to smaller platforms and often radicalise themselves there,” says Miro Dittrich, monitoring expert at the Amadeu Antonio Foundation. “Deplatforming sends a public signal, but doesn’t provide an answer. These people and their hatred won’t simply disappear. That’s only possible if we deal with the problem on a thematic level too.”

Joan Donovan writes in Wired: “Without a strategy for how to curate content, tech companies will always be one step behind media manipulators, disinformers, and purveyors of hate and fear … We can’t let the gains from this great expulsion dissipate as political pressure mounts on tech companies to enforce a false neutrality about racist and misogynist content … Truth needs an advocate and it should come in the form of an enormous flock of librarians descending on Silicon Valley to create the internet we deserve, an information ecosystem that serves the people.” Such an ecosystem should be as open as possible while ensuring that all democratic voices are protected and amplified. Platforms should direct their efforts not towards stoking hatred and profiting from it, but towards providing helpful content. Donovan is convinced that tech companies that fail to understand this will soon become obsolete.