Facebook Inc. (now Meta) is a US company headquartered in Menlo Park, California. The company owns the social network Facebook, the video and photo sharing app Instagram, the messenger WhatsApp, and Oculus VR, a manufacturer of virtual reality hardware. This section focuses on Facebook as a social network, designed for networking with friends, acquaintances, colleagues and like-minded people. In Europe, Facebook operates through a subsidiary in Dublin, Ireland, so European users enter into a contract with this company, which is subject to EU law. In 2021, Facebook had 420 million users in Europe (2.9 billion worldwide), 309 million of whom were active daily. There is evidence that in some regions, Facebook is becoming a platform for middle-aged people. In Germany, for example, 75% of people aged 30 to 39 use Facebook, but only 32% of people aged 16 to 19.

On Facebook, people network directly with each other, but they can also subscribe to pages or profiles and join public or private groups, so the platform offers different forms of publicity. Although Facebook emphasises personal interactions, the platform is also widely used for professional accounts, as a source of media information, and by parties and institutions. Facebook has repeatedly tried to restrict this non-private content. In August 2021, Facebook claimed that a test run in Canada, Brazil, Indonesia and parts of the US had shown that users would like to see less political content in their news feed. According to the company, political content currently makes up about 6% of the US news feed, but even that is considered too much. Further tests are now being rolled out in Costa Rica, Sweden, Spain and Ireland. In practical terms, the company points out, this means that less political content will be shown in the news feed, and this will also apply to journalistic media content. This could be a problem, because a reduction in reliable, professional news in the news feed will help disinformation spread. Facebook is also not transparent about its criteria for classifying content as political. The company is undoubtedly able to enforce such distinctions: it earns its money from advertising aimed at selected target audiences, a practice for which it has repeatedly been criticised. Examples include the 2018 Cambridge Analytica scandal, in which the Trump-supporting data firm used Facebook data for micro-targeting, and the fact that until 2017 the company allowed advertisers to select antisemites as a target group, i.e. users who had listed “Jew haters”, “How to burn Jews” or “Why Jews ruin the world” as “interests”.

Hate speech and radicalisation on Facebook

Facebook started in 2004 with a First Amendment-style ‘free speech’ approach that allowed virtually all expressions of opinion on the platform, even though community guidelines already existed that theoretically prohibited calls for violence and discrimination on the platform. However, these guidelines were not properly enforced at the time. Since then, the platform has not only grown continuously but has also developed its community standards, which vary from country to country across the worldwide network in accordance with different legal situations and social norms. Today, calls for violence and hate speech are forbidden on the platform, as are dangerous organisations, a category that includes hate organisations.

Facebook used this policy to remove far-right terrorist groups from the platform, but has also applied it to a wider range of far-right organisations, including Generation Identity, thus depriving the Europe-wide group of much of its reach and networking. One supporter of Generation Identity was the Islamophobic attacker from Christchurch, who referred positively to the group’s far-right conspiracy narratives. However, these policy changes came only once Facebook’s effect as a radicalisation machine could no longer be dismissed. In the most extreme case, in 2017, unmoderated hatred against the Rohingya minority in Myanmar, posted not only by ordinary users but also by military accounts, led to real violence against the Rohingya and their displacement. After that, Facebook also introduced a global ‘Oversight Board’ of experts to discuss new problematic content areas and make recommendations to the company.

Nevertheless, the sheer volume of content posted daily still makes it impossible to remove hate content without reports from users. Work on the community standards is an ongoing process: the definitions of dangerous organisations and protected groups need to be updated again and again. Practice shows that the automated filtering systems Facebook also uses can still be outwitted relatively easily. Facebook’s moderation teams have become better staffed and better trained over the years, but misjudgements are still common: for example, accounts that monitor and document antisemitic hate have been blocked, while the original antisemitic content was left standing.

On the other hand, the deplatforming of hate organisations in Germany is showing results, as a 2020 study by the Institute for Democracy and Civil Society (IDZ) in Jena states: through deplatforming, Facebook has increasingly lost its role as a central mobilisation platform for hate actors. Most of these actors now use it as an interface to share links or to comment on posts on other sites. However, a great deal of discussion and networking still takes place in closed groups.

Hate content on Facebook can be spread via text, images and videos, inscribed in group names and used in hashtags, although hashtags play a less significant role on Facebook than on Twitter, Instagram or TikTok. Given the network’s mass impact and reach, however, most of the discrimination and hate is disseminated in the comment sections of pages with high user numbers. Antisemites use Facebook strategically to disseminate and normalise their ideology.

Antisemitism on Facebook

Linguistic research has pointed out that antisemitism unites different hate groups. Far-right, Islamist and left-wing antisemitism often draw on similar, very traditional, classical antisemitic narratives and images (around 54% of antisemitism expressed on the internet uses classical narratives). This is easy to observe in a general interest network like Facebook: classical antisemitism, conspiracy ideologies, post-Holocaust antisemitism and Israel-related antisemitism are spread by various extremist groups, but also by ‘average’ users. Antisemitic stereotypes, which collectively ascribe fictitious characteristics to Jews, have been socially learned over 2,000 years and are inscribed in European culture. They also constantly adapt to new circumstances: the classical stereotype of well-poisoning, for example, was renewed at the outbreak of the COVID-19 pandemic in claims that Jews invented, or are using, the virus.

Antisemitic stereotypes are enormously reinvigorated by their constant repetition and renewal on social media. Long-term linguistic studies found a roughly fourfold increase in antisemitic comments from 2007 to 2017: on selected German media channels on Facebook, the share of antisemitic comments rose from 7.5% to 30.1% of all comments.

All forms of hostility towards Jews can be found on Facebook: religiously based anti-Judaism, racist antisemitism, anti-modern antisemitism (which alleges that Jews promote ‘modern’ ideas like equality, democracy, liberalism or feminism), post-Holocaust antisemitism (antisemitism referring to the Holocaust, such as Holocaust denial or the claim that Jews today take advantage of the Holocaust), and Israel-related antisemitism.

Motifs of classical anti-Judaism remain central: Jews as foreigners, as usurers and money men, as vengeful and power-seeking people, as murderers, ritual and blood cult practitioners, land robbers, destroyers and conspirators. However, the most widespread form of antisemitism online is Israel-related antisemitism, which studies find accounts for around a third (33.35%) of all antisemitism.

Fantasies of exterminating Jews, Holocaust denial and antisemitic hatred can be found on neo-Nazi and Islamist Facebook pages, but also on pages simply titled “Fuck Israel”, and on the channels of pandemic denialist groups and conspiracy ideologues. However, antisemitism is particularly powerful and widespread in the comment sections of mainstream media pages on Facebook. This phenomenon is analysed below.

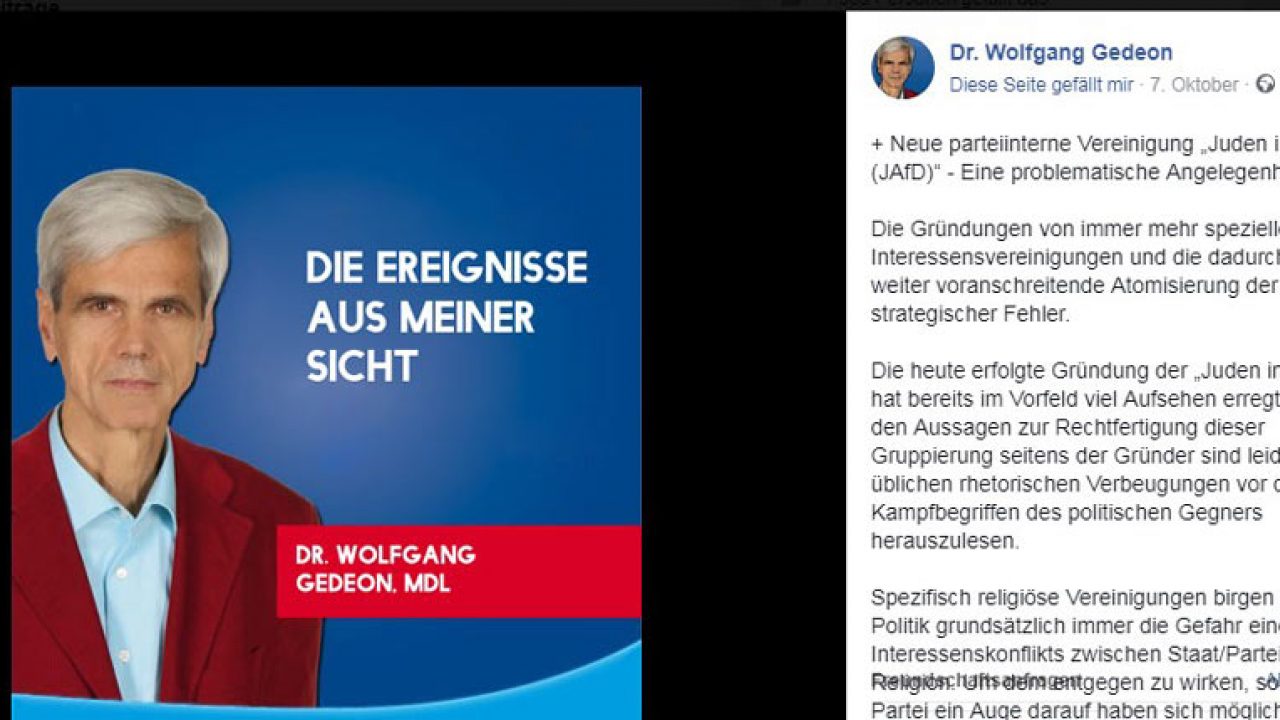

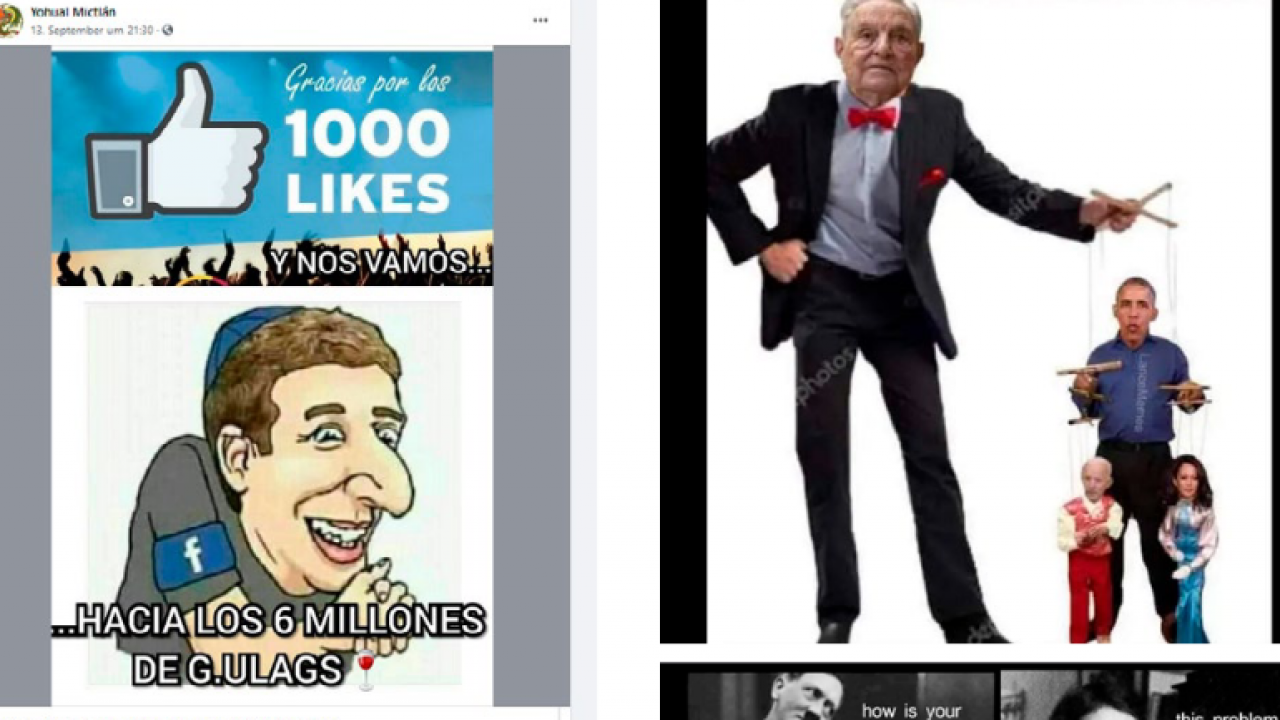

In practice, racist caricatures, photo montages and memes about alleged conspiratorial connections are shared in groups and on private profiles. Manipulated facts and documents taken out of their historical context are shared to prove that the Holocaust is a “fairy tale of lies”, either to denounce a “cult of guilt” (Schuldkult) meant to “keep the German people down” (neo-Nazis) or to deprive Jews of their victim status and portray them as aggressors (Islamists). Particular Jewish families are blamed for world events as a cipher for the Jewish people as a whole, often used synonymously with an imagined “Jewish world conspiracy”. Insiders understand these dog whistles immediately, but insufficiently trained moderation teams often do not, so these contributions remain on the page.

Facebook’s Community Guidelines

Antisemitic conspiracy ideologies remained the most “successful” on the platform: 89% of the reported posts stayed online. Facebook took any action at all on only 10.9% of all reported posts: 3.9% of posts were removed and 6.2% of accounts were closed (YouTube, the platform that reacted most often, did so in 21% of reported cases). Overall, reported calls for violence (30.7%), antisemitic cartoons (29.8%) and neo-Nazi content (29.3%) received the most reaction from the network, but still only in an average of 25% of reported cases.

By contrast, Facebook’s cooperation with selected civil society partner organisations has proven more effective. In Germany, for example, Facebook has worked for many years with the semi-governmental organisation Jugendschutz.net, which reports hate postings according to German youth protection standards. Jugendschutz.net published more encouraging figures in its 2019 publication on online antisemitism: when it reported cases as a trusted partner through its direct contact with the provider, 88% of the reported cases on Facebook were deleted or blocked for Germany, including sedition, Holocaust denial, racist depictions and depictions of symbols of unconstitutional organisations.

However, Jugendschutz.net also notes that much antisemitic content remained below the threshold of a violation relevant to the protection of minors or punishable by law, and was therefore often neither deleted nor hidden.

Holocaust denial on Facebook

Although educational notices are shown with every search, it is not too difficult to find Holocaust denial on Facebook, and it is sometimes still not removed even after being reported. A study by the Center for Countering Digital Hate (CCDH), in which researchers reported antisemitic posts on social networks to document the platforms’ reactions, found that six months after Facebook announced it would ban Holocaust denial, 80% of reported posts containing Holocaust denial or trivialisation remained on the platform.

In one case, outright Holocaust denial, spread by sharing an article from a well-known far-right website together with an antisemitic photo montage, was only given a ‘clarification’ label instead of being removed. As a result, it reached 246,000 likes, shares and comments. Holocaust denial often still stays online if people avoid the word ‘Holocaust’ and instead use phrases such as “the 6 million are also a lie”. Holocaust trivialisation is also often conveyed through memes and pictures, sometimes dubbed the ‘lolocaust’.

Antisemitism in comment sections

Antisemitic comments can be traced from hip-hop channels to climate change debates to student support communities. They also occur without any specific context, but there are clear ‘trigger’ topics, such as the Middle East conflict, terrorist attacks, historical topics (especially World War II), and Jewish topics or expressions of solidarity with Jews, that lead to a particularly high number of antisemitic comments. Their authors come from all social backgrounds, consider themselves part of the ‘middle’ of society and would deny belonging to radical milieus.

Antisemitism in Facebook comment sections differs in some, but not all, respects between European countries, as the study ‘Decoding Antisemitism’ by the Alfred Landecker Foundation (August 2021) showed. The study analysed a selection of comments on the Facebook pages of leading media outlets in Great Britain, France and Germany. It found that in the UK, the comment sections on coverage of the Middle East conflict contained about twice as many antisemitic statements as in the other two countries (UK: 26.9% of the 1,500 comments analysed, Germany: 13.6%, France: 12.6%). The situation was similar in the second example studied, the coverage of the launch of Israel’s vaccination campaign: 17% of comments in the Facebook comment sections of British media were antisemitic, compared with 7.5% in France and 3.4% in Germany.

In the UK, examples of Israel’s supposed amorality dominated, whereas arguments of guilt defence and of a supposed taboo on criticising Jews and Israel were almost exclusively German. Most users expressed their antisemitism openly and only rarely in code; many appear to have been under the impression that they were not expressing problematic attitudes. Indecisive moderation, or a lack of moderation altogether, reinforces this impression.

Conclusions

Attention to digital violence on the platform has risen sharply in recent years, driven by civil society and academic research and demands, but also by legislative measures such as Germany’s Network Enforcement Act (NetzDG) against hate speech. The NetzDG requires the timely deletion of hate speech that is relevant under criminal law. If hate content is repeatedly not deleted within a defined period, severe fines can be imposed.

This has increased the number of discriminatory messages deleted and improved the knowledge of the moderation teams. Nevertheless, a great deal of antisemitic hatred remains on the platform, and much more could clearly be done. A first step would be more precise training for the moderation teams, which appears to be especially necessary for antisemitic conspiracy narratives: these are explicitly banned by the community standards but are often not deleted even when reported.

Antisemitic hashtags and keywords should be removed from suggestion algorithms to at least prevent their automated dissemination, bearing in mind that antisemites are inventive and that such lists would need regular updating. When it comes to antisemitism in comment sections, the duty lies not only with Facebook but also with the operators of large Facebook pages and groups, who must moderate more consistently.

One option would be an obligation to moderate groups, pages and channels above a certain number of members. Last but not least, groups or persons who have been reported several times for spreading antisemitic hate should be excluded from the platform.

This text is an excerpt and can be read with illustrations and footnotes in:

Antisemitism in the Digital Age

Online Antisemitic Hate, Holocaust Denial, Conspiracy Ideologies and Terrorism in Europe

A Collaborative Research Report by Amadeu Antonio Foundation, Expo Foundation and HOPE not hate

2021

Contents:

- Executive Summary

- The Report in Numbers

- Introduction

- Conspiracy Ideologies, COVID-19 and Antisemitism

- Superconspiracies: QAnon and the New World Order

- Case study: Path of radicalisation into antisemitism

- The Changing Nature of Holocaust Denial in the Digital Age

- Case Studies of Antisemitism on Social Media:

  - Parler

  - Telegram

  - TikTok

  - YouTube

  - “4chan /pol/”

- Glossary of Antisemitic Terms

- Learnings from Project
